Almost Timely News: 🗞️ Advancing Up the 5 Levels of AI (2026-04-19)

Don’t reach for something that isn’t there

The Big Plugs

So many new things!

3️⃣ A free 25-minute webinar Katie and I did on GEO - even though it says the date is past, the link still works and takes you to the recording.

Content Authenticity Statement

100% of this week’s newsletter content was originated by me, the human. You’ll see me working with Gemini and Claude in the video version. Learn why this kind of disclosure is a good idea and might be required for anyone doing business in any capacity with the EU in the near future.

Watch This Newsletter On YouTube 📺

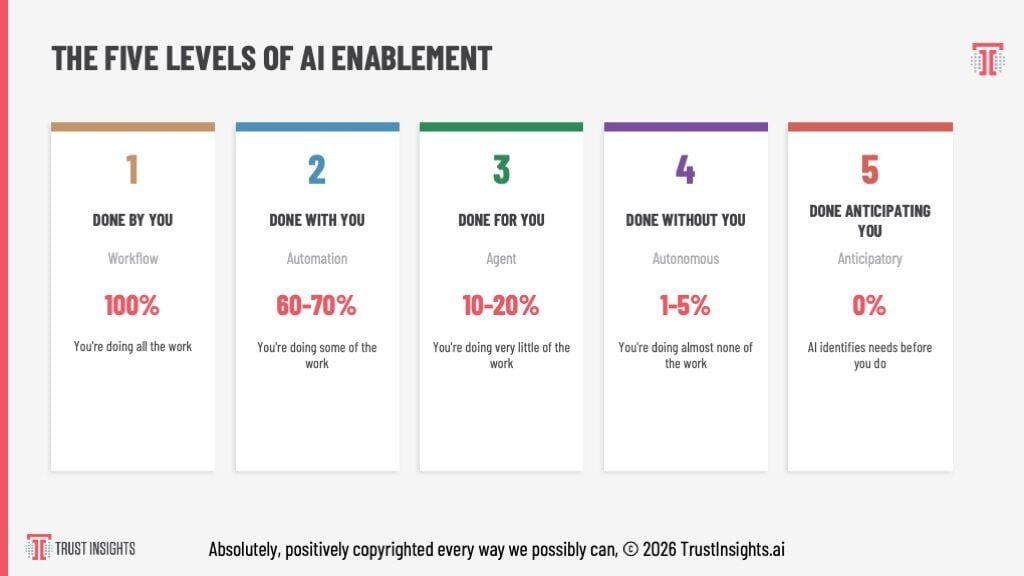

Click here for the video 📺 version of this newsletter on YouTube »

Click here for an MP3 audio 🎧 only version »

What’s On My Mind: Advancing Up the 5 Levels of AI

Last month, I shared with you my new framework for understanding where we are, as individuals and organizations, when it comes to AI enablement - what we can be doing with AI. If you missed that issue, you can find it here. This framework has formed the basis of my recent keynotes, and this week, I’ll take you through how to bring it to life. Frameworks are great, but frameworks that contain clear, prescriptive actions are even better.

To get where we want to go, we should think about where we’ve been.

Part 1: A Brief, Incomplete History of AI

AI has been around in some form since the 1950s, though only in the last 40 years have we had enough computational power to make it useful. Prior to 2017, most AI was what we now call classical AI or machine learning - lots and lots of math and probability in two main categories, regression and classification.

Regression is, given an outcome, either predicting more of that outcome or explaining how we got to that outcome. If you’ve ever done lead scoring or attribution analysis, that’s regression analysis. Classification is organizing your data. If you’ve used an email spam filter at any point since 1999, you’ve used classification AI in some capacity, even if you didn’t know it.

The challenge with these two branches of AI is that making them work required a tremendous amount of code and statistics. If you didn’t know R or Python or Scala or Julia, your ability to derive significant benefit from them was low.

In 2017, researchers at Google introduced an architecture called the transformer - no relationship to the 1980s toy line, unfortunately - that was originally designed for translating language.
Given enough data to train on, plus a novel mechanism (attention) that took into account all the data in an input and reprocessed everything with each new word it generated, the transformer architecture achieved totally new capabilities. Fast forward 5 years, and OpenAI released a very powerful (at the time) model called GPT-3.5 and a web interface to it, ChatGPT, starting what we now know as the generative AI revolution.

GPT-3.5 was reasonably powerful, with a short-term memory of 8,192 tokens, or roughly 5,000 words; users could interact with it and generate outputs of up to 3,000 words. But very quickly, folks realized that starting a new conversation with it meant literally starting over. It remembered nothing, and its background knowledge - 175 billion parameters - contained a very incomplete picture of the world.

More important, its memory was probability-based. It returned answers that matched high probabilities, not factual correctness. Back then, as late as 2021 and early 2022, you could ask a GPT-series model a question like “Who was president of the United States in 1492?” and it would answer - quite confidently - that it was Christopher Columbus.

Why did it do that? When the model breaks that question down into probabilities, it looks at the probabilities of the different pieces in it: the United States as an entity, the president as an important role, 1492 as an important date. Because the name Christopher Columbus is strongly associated with both America and 1492, it is the highest-probability answer, even though it’s factually wrong.

Those two missing features - a relatively small short-term memory (what we call the context window) and a probability-based long-term memory - are what severely limited the usefulness of generative AI in its early days.
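To make the Columbus example concrete, here is a toy Python sketch. The candidate answers and their scores are invented for illustration (real models score tokens, not whole answers), and `answer_with_fact_check` is a hypothetical stand-in for the kind of grounding that came later, not how any vendor actually implements it. What it shows is that “pick the most probable answer” and “pick the true answer” are two different operations.

```python
# Toy illustration only: the candidate answers and scores below are invented,
# not outputs from any real model (real models score tokens, not whole answers).
CANDIDATES = {
    "Christopher Columbus": 0.46,  # strongly associated with "America" and "1492"
    "George Washington": 0.31,  # strongly associated with "first president"
    "Nobody; there was no US president until 1789": 0.23,
}


def answer_by_probability(scores: dict[str, float]) -> str:
    """Return the highest-scoring candidate. Nothing here checks whether it is true."""
    return max(scores, key=scores.get)


def answer_with_fact_check(scores: dict[str, float], year: int) -> str:
    """Hypothetical grounding step: a deterministic fact overrides the probabilities."""
    first_presidency_began = 1789  # George Washington took office in 1789
    if year < first_presidency_began:
        return "Nobody; there was no US president until 1789"
    return answer_by_probability(scores)


print(answer_by_probability(CANDIDATES))  # Christopher Columbus
print(answer_with_fact_check(CANDIDATES, 1492))  # Nobody; there was no US president until 1789
```

The probabilistic pick is confident and wrong; only the external fact changes the answer, which is the gap that web-search grounding was bolted on to fill.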
Tech companies quickly realized that if AI models remained solely probability-based, they would never be broadly adopted, so virtually all of them added a fact-checking system grounded in web search, which gave birth to what we now call GEO/AEO/AIO/etc. In consumer-facing interfaces, AI models now use web search by default to validate what they’re doing and the answers they give.

Those limitations - no persistent memory, a temporary short-term memory, and a long-term memory based on probability combined with web search - describe where most consumer-facing AI systems are today. Whether it’s ChatGPT, Copilot, Gemini, Claude, etc., the basic features are all the same, which brings us to the Five Levels.

Part 2: A Very Fast Recap of the Five Levels

If you skipped the previous issue of the newsletter that talked about the five levels in depth, here is the TL;DR version, based on an old product-market fit framework:

If the five levels were about food, then Level 1 would be you cooking a meal at home. Level 2 would be you cooking from a meal kit where a lot of the prep is done for you, but you still have to do the final cooking. Level 3 would be you going out for dinner. Level 4 would be a personal chef who cooks for you in your home. Level 5 would be a live-in chef who prepares meals without even being asked.

Level 1 is chat - you have conversations with AI, you prompt it, you get answers from it. It’s what we all first experienced as early as July of 2021 with Eleuther.ai’s GPT-J-6B model (the first one that was really usable by the end user). If you’ve been hanging out with me and the Trust Insights team for a while, you’ll remember our early courses and lessons on prompting. Those lessons are still valid and useful today, but they’re now the first rung on the AI ladder, not the top.

To better understand the levels, let’s spend some time with the inputs and outputs. At Level 1, the inputs are text, whether it’s things you type, things you attach, etc. The output of AI tools is also text. Whether it’s simple chat or documents inside the web interface, it’s still fundamentally a pile of text that you, the user, have to do something with. You’re doing all the work - prompting, then taking the results and copying and pasting them somewhere else. To be sure, prompting is a vital skill, and knowing how to prompt well is what separates good results from bad. But folks got tired of copying and pasting literally everything and asked for ways to pre-prompt AI tools.

That leads us to Level 2, the mini-app automations. These are features like GPTs, Gemini Gems, Claude Projects, etc. Where at Level 1 we have prompts that we have to copy and paste ourselves, mini-apps like Gems and GPTs have the prompts and background knowledge baked in. Half the work is pre-set. When you use a GPT or a Gem, you don’t have to provide the majority of the prompt anymore.
You provide a much shorter prompt, the system instructions provide the rest, and the output comes back as text. You still have to do something with the text, but at least the copying and pasting of the input prompt is dramatically reduced.

Level 3 is where we start seeing more autonomy. At Level 3, agentic tools like Claude Cowork, Claude Code, OpenWork, Google Antigravity, OpenAI Codex, and many, many others allow us to create more powerful pre-built routines, often in the form of Skills and Plugins. Given detailed plans, these tools can take a plan and execute it. At Level 3, we also start to see AI models interacting directly with our computers and their file systems. So instead of us having to copy and paste things, the AI can write its output locally to our computers or, through various connectors, to other systems.

Level 4 is where we see substantially more autonomy. This is the territory of tools like OpenClaw, NemoClaw, Hermes Agent, Claude Managed Agents, and many other systems. At Level 4, we give agentic AI clear goals and measures of success, and it figures out how to achieve them. It writes its own skills, it improves itself, and ultimately it either achieves or doesn’t achieve the goal we set for it.

Level 5 is where agentic AI is given a very high-level goal and figures out all the implementation, including its own measures of success and what it needs to do to achieve that success. Level 5 is also where tools start to anticipate our needs, because they have persistent memory. They know, for example, that at the end of every month we have to do a report, and instead of waiting for us to ask, they deliver it to us proactively. There aren’t any Level 5 systems in existence today that are production-ready, but Level 4 systems are well on their way to evolving into Level 5 systems, and I expect Level 5 systems to be in production by the end of calendar year 2026, if not sooner.
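The monthly-report example of anticipation can be sketched in a few lines of Python. This is a minimal illustration under loud assumptions: `RecurringObligation`, `proactive_tasks`, and the `"last_day_of_month"` rule are hypothetical names invented here, not features of any product named above. The point it shows is that anticipation is persistent memory plus a check that runs on a schedule, rather than a reply to a prompt.

```python
import calendar
from dataclasses import dataclass
from datetime import date


@dataclass
class RecurringObligation:
    """One fact the agent has remembered about our routine (hypothetical schema)."""
    name: str
    due: str  # only "last_day_of_month" is handled in this sketch


def is_last_day_of_month(day: date) -> bool:
    # calendar.monthrange returns (weekday_of_first_day, number_of_days_in_month)
    return day.day == calendar.monthrange(day.year, day.month)[1]


def proactive_tasks(memory: list[RecurringObligation], today: date) -> list[str]:
    """Return the tasks the agent should start today without being asked."""
    due_today = []
    for item in memory:
        if item.due == "last_day_of_month" and is_last_day_of_month(today):
            due_today.append(item.name)
    return due_today


memory = [RecurringObligation("monthly marketing report", "last_day_of_month")]
print(proactive_tasks(memory, date(2026, 4, 30)))  # ['monthly marketing report']
print(proactive_tasks(memory, date(2026, 4, 19)))  # []
```

A real system would run a check like this on a timer and hand any due task to an agent; without the stored memory, there is nothing to anticipate.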
All the components to make a Level 5 system exist today; they just haven’t been sewn together in an accessible, easy way.

Part 3: Why Harnesses Matter as Much as Models

How did we get from simple copying and pasting to machines that can anticipate our needs and build intelligent outputs for us? The answer is the harness.

In AI, the model is essentially the engine of the vehicle. It’s very powerful and it can do a lot of work in different ways, but no one rides down the road sitting on an engine block. We sit inside a car. The harness is to an AI model what the car is to the engine.

What’s changed dramatically in the last 12 to 18 months is not only that models have gotten smarter and more capable, but that they’ve learned what’s called tool handling: they’re told that a tool exists, and they know what to do with it, from basic web search all the way up to complex operations like using command-line interfaces. Instead of reinventing the wheel and working out how to connect to a system we care about, like our CRM, today’s agentic harnesses have connections to the systems we care about, and they tell the model, “hey, this CRM is available” or “this email inbox is available”. The model, knowing those tools are available, doesn’t have to write its own code to talk to that system; it simply picks up the tool and uses it.

The harness is also where we impose many of the guardrails on AI. The reason we didn’t see tremendous explosions of productivity with Level 1 and Level 2 AI tools is that chat-based AI interfaces in some ways have overly restrictive guardrails, and in other ways don’t have enough guardrails.

The most potent guardrail we can impose on AI is memory itself. Because generative AI is probabilistic in nature, if we don’t provide any form of memory, it has to use its own internal probabilities to essentially guess at what our intent is and what is and is not allowed.
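The tool-handling idea can be sketched in Python. Everything here is hypothetical: the `Harness` class, its `register`/`run` methods, and the two connector functions returning fake data are invented for illustration and do not mirror any particular product’s API. What the sketch shows is the division of labor: the harness holds the connections and the list of permitted tools, the model only chooses a tool by name, and the harness refuses anything it didn’t register, which is one simple form of guardrail.

```python
from typing import Any, Callable


# Hypothetical connectors standing in for real CRM and inbox integrations.
def lookup_crm_contact(email: str) -> dict:
    return {"email": email, "stage": "opportunity"}  # fake data


def count_unread_email(folder: str) -> int:
    return 3  # fake data


class Harness:
    """The harness owns the tools; the model only names the tool it wants."""

    def __init__(self) -> None:
        self._tools: dict[str, tuple[Callable[..., Any], str]] = {}

    def register(self, name: str, fn: Callable[..., Any], description: str) -> None:
        self._tools[name] = (fn, description)

    def tool_menu(self) -> str:
        # The text the model is shown: "hey, this CRM is available", etc.
        return "\n".join(f"{name}: {desc}" for name, (_, desc) in self._tools.items())

    def run(self, tool_call: dict) -> Any:
        # Guardrail: the model can only use tools we registered.
        name = tool_call["tool"]
        if name not in self._tools:
            raise PermissionError(f"Tool not available: {name}")
        fn, _ = self._tools[name]
        return fn(**tool_call["args"])


harness = Harness()
harness.register("crm_lookup", lookup_crm_contact, "Look up a contact in the CRM by email")
harness.register("inbox_unread", count_unread_email, "Count unread messages in an inbox folder")

# Pretend the model replied with this structured tool call after reading tool_menu().
model_reply = {"tool": "crm_lookup", "args": {"email": "pat@example.com"}}
print(harness.run(model_reply))  # {'email': 'pat@example.com', 'stage': 'opportunity'}
```

Because the model never touches the CRM directly, a different model could be dropped in and the tool list and guardrail would stay exactly the same.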
Going back to the earlier Christopher Columbus example: because there were no guardrails whatsoever and no memory, the system generated a pure probability and answered the question incorrectly. On the other hand, if we impose memory structures on AI inside the harness, with things like connections to our inbox, to our CRM, and to knowledge blocks we’ve created, like ideal customer profiles or company About pages, then those effectively create guardrails and containers: limits on what AI should and should not do. The more memory our harness provides, the better the guardrails on AI and its outputs.

Which brings me to one of the most important points about model capabilities. Every day you read about some new AI company introducing some new model and how it’s the best thing since sliced bread. Part of what creates a sense of overwhelm in AI is the sheer volume of announcements, and you and I are trying to figure out which ones to actually pay attention to and what actually matters.

A great harness creates enough guardrails around AI that you can put different types of models inside it and get reasonably consistent results. This has huge implications for things like cost and sustainability. If we put a smaller model inside a very capable harness, it might generate results just as good as a large, expensive model in the same harness. The large, expensive model may well get there faster, but the quality might be equivalent if our harness is sophisticated enough.

Here’s a concrete example. Imagine you’re going to use generative AI to write a novel. If you use a model with no harness around it other than a basic chat interface, you could get wildly different results each time you tried. And those different results might lead you to believe that you need bigger and better models.
On the other hand, if your harness includes features like character cards, world cards, writing styles, and all the deterministic requirements (things that are provably true or false), then even a less capable model will still generate good results, because you’re holding it to very strict standards.

Think about the difference between a four-cylinder and an eight-cylinder engine. A V8 engine is incredibly powerful, but only certain vehicles can handle it. Putting a V8 engine inside a Prius is a terrible idea. However, you could put a four-cylinder engine inside a well-made car body that can accommodate larger engines. You might not be able to pull as much of a load or go as fast, but you’ll still have a working vehicle.

What we were introduced to at Level 3 of the five levels is the concept of harnesses. Up until that point, the idea of a model being separate from its interface was relatively foreign. If you used ChatGPT, you used GPTs. If you used Gemini, you used Gems. The model and the harness were one and the same.

A tool like Claude Cowork is a great harness around a great engine. What makes Cowork different is that you can start to swap different models in. Inside Cowork, you can only choose from Anthropic’s models. However, it’s not the only game in town. There are many different harnesses, and some of them can accommodate hundreds of different types of engines. When tools like OpenWork or OpenClaw came out, they offered users the chance to use nearly any engine inside them.

The key takeaway here is that the engine and the harness are now separate, and you don’t have to spend top dollar on the nicest engine if you have a great harness around it.

Part 4: How to Advance Up the Levels

Now let’s get down to brass tacks. Suppose you are still a Level 1 user.
You are still prompting ChatGPT or Gemini or Claude every day, and still being the copy-paste monkey. How do you move up the levels? How do you advance your AI capabilities as an individual?

Level 1 inputs are chats. To get to Level 2, with things like GPTs and Gems, you have to turn your one-off conversations into standard operating procedures, something my friend, co-founder and CEO Katie Robbert talks about all the time. Standard operating procedures, or SOPs, are what allow a business to scale. If you’re constantly reinventing the wheel, you’re losing out on productivity. The same thing applies to generative AI. If you’re constantly reinventing the wheel in every chat, you’re not going to get scalable value out of AI. So your first order of business to ascend from Level 1 to Level 2 is to start codifying your most-used prompts as standard operating procedures that you can then put into GPTs, Gems, Claude Projects, or whatever the system you’re using calls them.

Once you’ve gone through the effort of taking specific prompts and making them generic enough to serve as standard operating procedures, you’re ready to start looking at the move from Level 2 to Level 3. This is where you start using agentic systems like Claude Cowork, OpenWork, Google Antigravity, or OpenAI Codex. The standard operating procedures you built at Level 2 are supremely relevant at Level 3, but they get put in the context of a project plan. The framework I recommend most for working with agentic tools like Claude Cowork is the 5P Framework by Trust Insights™. It is the perfect structure for designing project plans that you then hand off to an agentic tool to go and execute.
If you sit down and define purpose, people, process, platform, and performance, and you use the standard operating procedures from Level 2 in your project plan for Level 3, you will get great results, because your AI model won’t have to reinvent the wheel by designing its own processes. Instead, you’ll provide it the standard operating procedures it needs to accomplish its work efficiently.

Making the move from Level 3 to Level 4 is about recognizing that agentic systems like OpenClaw, Claude Managed Agents, or Hermes are effectively employees. You’re no longer delegating a project-level directive to the AI agent. Instead, you’re giving it a job description. A research agent will have a job description that tells it what good research is, how to do it, and what your overall research goals are, and it will go and carry them out and come back to you with the finished product. A coding agent at Level 4 is given a job description, a PRD (product requirements document), and a spec, and fulfills the entire thing all the way through QA, user acceptance, and deployment, just like a real developer.

Think about it. What is a job description except a compilation of projects? What is a project plan except a compilation of skills? What is a skill except a compilation of practices you’ve developed? Each level builds on the previous one and creates the precursors for the next level.

That brings us to moving up to Level 5. Again, Level 5 systems do not exist in production today, but it is a very small leap of the imagination to understand what their architecture is likely to be. If a Level 4 AI agent is effectively an employee, then a Level 5 AI agent system is effectively an agency or a team. There will be a manager agent and then individual contributor agents, and what you’ll create it with is something like a strategic charter.
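One way to picture this nesting is as a set of Python dataclasses, where each level holds the documents from the level below as its reference library. This is a sketch under assumptions: the class names, every field name other than the five Ps themselves, and the sample values are hypothetical and are not a Trust Insights template; putting SOPs inside the Process field is an illustrative choice. The skill name `geo-blog-post` is borrowed from the walkthrough in Part 5.

```python
from dataclasses import dataclass, field


@dataclass
class SOP:  # Level 2: a reusable procedure distilled from one-off prompts
    name: str
    steps: list[str]


@dataclass
class ProjectPlan:  # Level 3: the five Ps, with SOPs as the reference library
    purpose: str
    people: list[str]
    process: list[SOP]
    platform: list[str]
    performance: list[str]  # how we will know the project worked


@dataclass
class JobDescription:  # Level 4: an "employee" built from project plans
    role: str
    goals: list[str]
    projects: list[ProjectPlan] = field(default_factory=list)


@dataclass
class StrategicCharter:  # Level 5 (speculative): a team built from job descriptions
    mission: str
    team: list[JobDescription] = field(default_factory=list)


def sop_library(charter: StrategicCharter) -> list[str]:
    """Walk down the levels and list every SOP the whole team can draw on."""
    return [sop.name for job in charter.team for plan in job.projects for sop in plan.process]


# Hypothetical sample values for illustration.
optimize = SOP("geo-blog-post", ["audit the page", "revise the copy", "check the result"])
plan = ProjectPlan(
    purpose="Optimize blog posts for GEO",
    people=["content lead"],
    process=[optimize],
    platform=["Claude Cowork"],
    performance=["every post passes the SOP checklist"],
)
writer = JobDescription("GEO content agent", ["keep the blog GEO-ready"], [plan])
charter = StrategicCharter("Be recommended by AI for our core topics", [writer])
print(sop_library(charter))  # ['geo-blog-post']
```

The design point is that nothing at a higher level is written from scratch: a weak SOP at the bottom weakens every structure that contains it.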
Instead of a software developer at Level 4, at Level 5 you might have a software development team, and on that team will be project managers, QA testers, and coders.

If you’re good at writing prompts today, get good at turning those prompts into standard operating procedures. If you’re good at writing SOPs today, get good at turning those into project plans. If you’re good at writing project plans, get good at turning those into job descriptions. And if you’re good at writing job descriptions, get good at writing strategic charters.

Each level is built on your success at the previous level and composed of the documents from the previous level. A job description should have project plans available as a reference library. A project plan should have standard operating procedures available as a reference library. Standard operating procedures should have key concepts and background information available as a reference library.

Part 5: An Example Walkthrough

Let’s look at a simple example of how you’d move from one level to the next. We’ll start with a task common to many marketers: optimizing a web page for things like SEO or GEO. Suppose you start with a prompt like this, based on the RACE Framework from Trust Insights - role, action, context, execution.

This is a solid and robust prompt, but it’s highly specific. So the next step is to make it generic enough to apply to any topic, so that we can distill the standard operating procedure out of it. Let’s move this prompt up to Level 2 by making it topic-agnostic. Here’s what an SOP might look like, derived from the original prompt.

This standard operating procedure is now something that can become a skill. You could put it in a Gem or a GPT, or even use it as a skill in a system like Claude Code or Claude Cowork, and it would be good at doing this task broadly.

Moving up to Level 3, we’d take this SOP and turn it into a skill, something like geo-blog-post. Then, in an agentic system like Claude Cowork, we’d apply the 5P Framework by Trust Insights™ to it. This project plan is something you would hand to a tool like Claude Cowork or Google Antigravity, and it would just go off and do it, and come back to you later with everything done.

Moving up to Level 4, you would convert this into a job description. This Level 4 job description is what you would use as the DNA for an OpenClaw agent, a Claude Managed Agent, or a Hermes agent. This job description, plus the project plans from the previous step, plus the skill you built from your Level 2 SOP, is how you would create a highly effective agent that does what you want it to do.

As you can see from this example, we are building on each stage of the five levels. If you didn’t create a great skill at Level 2, or you didn’t create a great project plan at Level 3, then a loose, vague job description at Level 4 is going to create an agent that delivers unpredictable results and wastes your time and money. Beyond the difficulty of setting up a system like OpenClaw, one of the things people are experiencing with it is that the system doesn’t give reliable results, and when you look at the prompts they’re using as starting prompts, they are not job descriptions.
People are treating a Level 4 system like it’s a Level 1 chatbot, and then wondering why it’s not creating great outputs.

Part 6: Wrapping Up

The technology behind today’s agentic systems isn’t necessarily new. It’s an evolution of what was already there. That means, in turn, that if we evolve how we use these systems, going from basic chats to standard operating procedures to project plans to job descriptions to strategic charters, we evolve alongside the technology. We provide it what it needs to get the job done, and we provide it the outcomes we want it to meet.

But as I said in the previous issue, you can’t skip steps. You can’t jump ahead. A martial arts teacher of mine once said, “Don’t reach for something that isn’t there.” What they meant in that context was not using techniques outside our repertoire, outside of what we had practiced. If you’ve never written a project plan for agentic AI, you probably should not be writing job descriptions for it. If you’ve never written a standard operating procedure for AI, you shouldn’t be creating project plans for it.

And looking ahead, it is a very small leap of logic to realize that if Level 4 is effectively an employee and Level 5 is effectively a team or a department, then the fictional, unrealized Level 6 is an entire company, and we might well name that “Done replacing you” as the logical extension of “done ahead of you”. At that imaginary level, agentic AI would take on the role of every major department and run a virtual company. You, as the stakeholder and originator of the idea, would provide everything necessary from Levels 1 through 5, and then let AI go off and do its thing for days, weeks, or months at a time. Today’s models are capable of working on a problem in a sustained fashion for up to twelve hours at a time, according to METR’s assessments of how long a task AI can complete, measured in the time it would take a human to do the same task.
Newer models like GLM 5.1 and Claude Mythos are likely to extend that time well into the 24-hour range, where a model can take a very large task and work on it autonomously, coming back to us only when it’s done or when it hits a critical failure. Once that time horizon extends into days or even weeks, Levels 5 and 6 become much more plausible.

Our role in that world is to continue evolving as the stakeholder AI answers to. That means we have to keep up our skills, and match how we direct AI to the level of sophistication AI has and the time horizons it operates on.

The biggest flaw I see in today’s use of Level 3 and up is treating those systems like Level 1 systems. Once you get into a system like Claude Code or Claude Cowork, you really shouldn’t be doing interactive chats. Instead, you should be handing off complete project plans. When you’re working with OpenClaw and other Level 4 systems, you really should be handing off job descriptions, project plans, skills, etc., to the system, and it will know what to do with them.

What lies ahead is a world where machines, properly directed, will be able to accomplish not just what one job can do, but what an entire company can do. If you want to have a vital, valuable role in that world, these are the skills you need to master today.

How Was This Issue?

Rate this week’s newsletter issue with a single click/tap. Your feedback over time helps me figure out what content to create for you.

Here’s The Unsubscribe

It took me a while to find a convenient way to link it up, but here’s how to get to the unsubscribe. If you don’t see anything, here’s the text link to copy and paste: https://almosttimely.substack.com/action/disable_email

Share With a Friend or Colleague

Please share this newsletter with two other people. Send this URL to your friends/colleagues: https://www.christopherspenn.com/newsletter

For enrolled subscribers on Substack, there are referral rewards if you refer 100, 200, or 300 other readers.
Visit the Leaderboard here.

ICYMI: In Case You Missed It

Here’s content from the last week in case things fell through the cracks:

On The Tubes

Here’s what debuted on my YouTube channel this week:

Skill Up With Classes

These are just a few of the classes I have available over at the Trust Insights website that you can take.

Premium

Free

Advertisement: New GEO 101 Course

When I talk to folks like you, I hear that being recommended by AI is one of your top marketing concerns in 2026. We’ve taken everything we’ve learned from OpenAI’s documentation, Google’s technical papers, patents, and sample code, plus our years of experience in generative AI, to assemble a high-impact 90-minute course, GEO 101 for Marketers. In this course, you’ll learn:

This course is meant to be used. In addition to the course itself, you’ll also receive:

And best of all, this is our most affordable course yet. GEO 101 for Marketers is USD 99 and is available today.

👉 Enroll here in GEO 101 for Marketers!

Get Back To Work!

Folks who post jobs in the free Analytics for Marketers Slack community may have those jobs shared here, too. If you’re looking for work, check out these recent open positions, and check out the Slack group for the comprehensive list.

Advertisement: My AI Book!

In Almost Timeless, generative AI expert Christopher Penn provides the definitive playbook. Drawing on 18 months of in-the-trenches work and insights from thousands of real-world questions, Penn distills the noise into 48 foundational principles: durable mental models that give you a more permanent, strategic understanding of this transformative technology. In this book, you will learn to:

Stop feeling overwhelmed. Start leading with confidence. By the time you finish Almost Timeless, you won’t just know what to do; you will understand why you are doing it. And in an age of constant change, that understanding is the only real competitive advantage.

👉 Order your copy of Almost Timeless: 48 Foundation Principles of Generative AI today!

How to Stay in Touch

Let’s make sure we’re connected in the places it suits you best. Here’s where you can find different content:

Listen to my theme song as a new single:

Advertisement: Ukraine 🇺🇦 Humanitarian Fund

The war to free Ukraine continues. If you’d like to support humanitarian efforts in Ukraine, the Ukrainian government has set up a special portal, United24, to help make contributing easy. The effort to free Ukraine from Russia’s illegal invasion needs your ongoing support.

👉 Donate today to the Ukraine Humanitarian Relief Fund »

Events I’ll Be At

Here are the public events where I’m speaking and attending. Say hi if you’re at an event also:

There are also private events that aren’t open to the public.

If you’re an event organizer, let me help your event shine. Visit my speaking page for more details.

Can’t be at an event? Stop by my private Slack group instead, Analytics for Marketers.

Required Disclosures

Events with links have purchased sponsorships in this newsletter, and as a result, I receive direct financial compensation for promoting them.

Advertisements in this newsletter have paid to be promoted, and as a result, I receive direct financial compensation for promoting them.

My company, Trust Insights, maintains business partnerships with companies including, but not limited to, IBM, Cisco Systems, Amazon, Talkwalker, MarketingProfs, MarketMuse, Agorapulse, Hubspot, Informa, Demandbase, The Marketing AI Institute, and others. While links shared from partners are not explicit endorsements, nor do they directly financially benefit Trust Insights, a commercial relationship exists for which Trust Insights may receive indirect financial benefit, and thus I may receive indirect financial benefit from them as well.

Thank You

Thanks for subscribing and reading this far. I appreciate it. As always, thank you for your support, your attention, and your kindness.

Please share this newsletter with two other people.

See you next week,

Christopher S. Penn
